Adding Phaser Hand-Gestures to the HTML Mobile Browser Game

Hello persons, one of the target platforms for my persistent-universe online single/co-op game is the mobile browser. I want players to be able to check their games from their mobile phones at any time and any place. It is thus necessary to support hand gestures in the mobile HTML view. Panning, pinching, and tapping are the minimum needed for basic gameplay. After some adventures and some DevTools performance profiling, I got it working properly.

This is my first time trying to achieve this with Phaser. I had already noticed the Rex Phaser Notes repository with its one- and two-finger gesture support. Following the not-reinventing-the-wheel philosophy, I was quick to try Rex's code in my game. Here is a link to the Rex plugin's page: https://rexrainbow.github.io/phaser3-rex-notes/docs/site/gesture-overview/

After following the instructions and writing some rudimentary code, I managed to get my pan and pinch gestures working, at least to some extent. I then went on to refactor all my pointerdown events into tap gestures recognized by the plugin.

Aside: if I were to keep the pointerdown events, panning would not be possible, as pointerdown fires instantly and you need some logic to tell whether the player is panning or tapping. This is where the plugin comes into play. Without the plugin, in the galaxy screen for example, trying to pan the scene by placing my finger on a Star sprite would start up the star system scene instead of initiating a pan action.
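To make the "some logic" part concrete, here is a minimal sketch of how a tap can be told apart from a pan. It is not the Rex plugin's implementation and not the code from my game. The class name `TapOrPanClassifier`, the 10 px movement threshold, and the 250 ms tap window are all assumptions picked for illustration.

```typescript
// Hypothetical helper: not part of Phaser or the Rex plugin.
type Gesture = "tap" | "pan" | "pending";

interface PointerSample {
  x: number;      // pointer position in screen pixels
  y: number;
  timeMs: number; // timestamp of the event in milliseconds
}

class TapOrPanClassifier {
  private start: PointerSample | null = null;
  private panning = false;
  private readonly moveThresholdPx: number;
  private readonly maxTapMs: number;

  // Both defaults are assumed values; tune them on a real device.
  constructor(moveThresholdPx: number = 10, maxTapMs: number = 250) {
    this.moveThresholdPx = moveThresholdPx;
    this.maxTapMs = maxTapMs;
  }

  // Call on pointerdown. Nothing is decided yet.
  down(sample: PointerSample): void {
    this.start = sample;
    this.panning = false;
  }

  // Call on pointermove. Becomes "pan" once the finger has travelled far enough.
  move(sample: PointerSample): Gesture {
    if (this.start === null) return "pending";
    this.updatePanning(sample);
    return this.panning ? "pan" : "pending";
  }

  // Call on pointerup. A tap is a short press that never left the threshold radius.
  up(sample: PointerSample): Gesture {
    if (this.start === null) return "pending";
    this.updatePanning(sample);
    const heldMs = sample.timeMs - this.start.timeMs;
    let result: Gesture = "pending"; // long press without movement: neither tap nor pan
    if (this.panning) {
      result = "pan";
    } else if (heldMs <= this.maxTapMs) {
      result = "tap";
    }
    this.start = null;
    this.panning = false;
    return result;
  }

  private updatePanning(sample: PointerSample): void {
    if (this.start === null) return;
    const dist = Math.hypot(sample.x - this.start.x, sample.y - this.start.y);
    if (dist > this.moveThresholdPx) this.panning = true;
  }
}
```

The point of the sketch is that the decision is deferred: nothing happens on pointerdown, and the star system scene would only be started when `up` returns `"tap"`.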

I also found a great way, or maybe the way to test my game on a real phone with the development version running on localhost, using Chrome. It's not the scope of this post to describe that and it would be mostly citations of the guide I followed to get this working, so I'll skip that.

So I launched the game on my mobile browser, panned and zoomed happily, tapped a star to enter a star system and... whoa. It took some time to switch to the star system scene. Too much time for a normal tapping flow. Things were lagging. Going back to the plugin's documentation, I saw the time and tapInterval settings and tried combinations of those, but they seemed to have no effect. Frustrated with hippie-open-source libraries such as this, I decided to roll my own minimal gesture implementation and remove the dependency on Rex's plugin (spoiler: I do use it, read on).

Excited to write real code instead of being a plumber-programmer, I added Jest to my frontend project, wrote some tests, designed an abstract class for tracking pointerdowns and keeping track of things, etc. etc. etc.

I got all the tests passing and the tap gesture working, integrated the new code into the real game code, tapped the star on the galaxy scene, and expected to get into the star system scene instantly.

Same lag 🤦

(I went to sleep miserable and cold at this point).

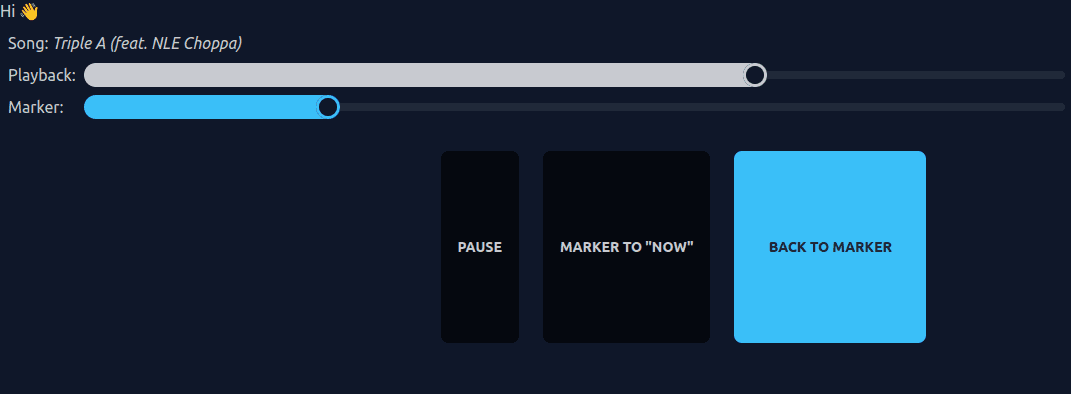

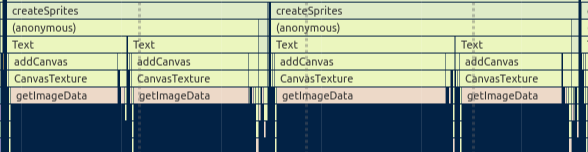

The next time I had a development session for the game, I rolled up my sleeves and fired up the DevTools profiler. I entered and exited the star system about four times to see if I could find a repeating performance-issue pattern and, guess what, I did find the issue.

In the image, the createSprites method is part of the PlanetGameObject code that handles a planet shown in the star system. It creates the planet sprite itself and some texts around it (a label and the resources available at the moment). We see two planets' createSprites calls, each with two texts that take quite some time to render 😱. That's the bottleneck I was facing! Problem found.

In a short chat on Phaser's Discord, I asked about regular font rendering vs. bitmap font rendering and whether the latter might fix the performance hit. However, someone suggested another way: using the DOM.

🤔

I was already using the DOM for the UI things such as globally available resources and a build panel listing the current builds being processed, so I had some framework of rendering DOM onto the Phaser canvas somehow. So why not use it for rendering in-game UI as well, such as my planets' statistics?

I removed Phaser's text rendering and added DOM texts for each planet in the system at a random position on the screen. It is wise to first check whether this solves the performance problem before getting into positioning the DOM elements on the planets themselves (taking the current camera pan and zoom into account).

And, no performance problems! Tapping on a star in the galaxy fired up the star system scene without lagging. Moreover, panning while placing my finger on a star in the galaxy scene initiated a panning action, so pan and tap now triggered the two different behaviors I needed.

I'll show some code for positioning the texts on the screen as it works currently. In Phaser 3, there's no world-to-screen-point function for some reason, and this recurring question on Discord seems to have a not-so-complicated solution that I luckily managed to arrive at.

WARNING: UGLY CODE AHEAD.

Don't ask why, I'm currently using MobX and React for the DOM UI. Bear with me.

```ts
setOffsetsFromCamera(camera: Phaser.Cameras.Scene2D.Camera): void {
  const { worldView } = camera;
  this.sceneOffsetX =
    (-worldView.left - (worldView.width * camera.zoom) / 2) * camera.zoom;
  this.sceneOffsetY =
    (-worldView.top - (worldView.height * camera.zoom) / 2) * camera.zoom;
  this.sceneZoom = camera.zoom;
}
```

This function lives inside a MobX store intended for keeping UI state. Whenever I pan, pinch, and so on, I call it with the Phaser camera instance. What it does is try to calculate the offset, in pixels, by which I need to transform the in-game DOM.

Now for the React part:

```tsx
const InGameDOM = observer((): JSX.Element => {
  const translateX = gameUIStore.sceneOffsetX + "px";
  const translateY = gameUIStore.sceneOffsetY + "px";
  return (
    <Box
      position="fixed"
      top={0}
      left={0}
      transform={`translate(${translateX}, ${translateY}) scale(${gameUIStore.sceneZoom})`}
    >
      {gameUIStore.inGameElements.map(({ element, x, y, key }) => {
        return (
          <Box position={"absolute"} left={x} top={y} key={key}>
            {element}
          </Box>
        );
      })}
    </Box>
  );
});
```

The Box thing is from "Chakra UI", which I was giving a test run. The important part is the transform I'm using to translate and scale the in-game DOM using CSS. I will probably have to do something about the scaling in the future, as I probably don't want the in-game texts to scale as well while pinch-zooming.

This method works for now. Forgive me for not analyzing exactly what I did here, but maybe these snippets will help you as a starting point for some world-to-screen-point code.
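For anyone who wants a more direct starting point, here is a minimal sketch of a world-to-screen conversion. It is not the code from my game and not a Phaser API: `CameraLike`, `worldToScreen`, and `screenToWorld` are hypothetical names. The sketch assumes an unrotated camera, and that `worldView` holds the top-left world coordinate currently visible through the camera, so it only reads `x`, `y`, `zoom`, and `worldView` from the camera. It also assumes canvas pixels map 1:1 to CSS pixels, which may not hold if the canvas itself is scaled.

```typescript
// Minimal structural type: only the camera fields this sketch reads.
interface CameraLike {
  x: number;    // left edge of the camera viewport on the canvas, in pixels
  y: number;    // top edge of the camera viewport on the canvas, in pixels
  zoom: number; // uniform zoom factor
  worldView: { x: number; y: number }; // top-left visible world coordinate
}

interface Point {
  x: number;
  y: number;
}

// World -> screen: distance from the visible top-left corner, scaled by the zoom.
function worldToScreen(camera: CameraLike, world: Point): Point {
  return {
    x: (world.x - camera.worldView.x) * camera.zoom + camera.x,
    y: (world.y - camera.worldView.y) * camera.zoom + camera.y,
  };
}

// Screen -> world: the inverse of the function above.
function screenToWorld(camera: CameraLike, screen: Point): Point {
  return {
    x: (screen.x - camera.x) / camera.zoom + camera.worldView.x,
    y: (screen.y - camera.y) / camera.zoom + camera.worldView.y,
  };
}

// Example: at zoom 2, a planet 10 world units right of and below the visible
// top-left corner ends up 20 pixels from the viewport corner.
const exampleCamera: CameraLike = { x: 0, y: 0, zoom: 2, worldView: { x: 100, y: 50 } };
const planetOnScreen = worldToScreen(exampleCamera, { x: 110, y: 60 });
```

With something like this, each DOM label could be positioned individually at its planet's screen point, which would also avoid scaling the text while pinch-zooming.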

One final thing that I have not implemented yet and that the Rex plugin does not address: when you pinch-zoom, for example in a map app, the point between your two fingers is sort of 'focused' on and the pinch 'zooms' there. The plugin's pinch functionality just zooms to the center of the screen regardless of your fingers' positions. Well, it's not the plugin that is to blame, it's the code that handles the pinch. The plugin's example also has this defect and I'll fix it some day, but for now I decided to squash some more bugs and do some pre-pre-alpha balancing before handing the game to some friends for initial testing(!).
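As a note to my future self, here is a rough sketch of the math for zooming toward the point between the fingers. I have not wired this into the game. `ZoomCamera` and `zoomAroundPoint` are hypothetical names, and the sketch assumes the camera zooms about the center of its viewport and is positioned by `scrollX` and `scrollY`. The idea is to find the world point under the focal point before the zoom, then pick the scroll that puts the same world point under the same screen point afterwards.

```typescript
// Only the camera fields this sketch needs; all names here are hypothetical.
interface ZoomCamera {
  scrollX: number;
  scrollY: number;
  zoom: number;
  width: number;  // viewport width in pixels
  height: number; // viewport height in pixels
}

interface ScreenPoint {
  x: number;
  y: number;
}

// World coordinate under a screen point, assuming zoom is applied about the viewport center.
function worldUnder(camera: ZoomCamera, screen: ScreenPoint): ScreenPoint {
  return {
    x: camera.scrollX + camera.width / 2 + (screen.x - camera.width / 2) / camera.zoom,
    y: camera.scrollY + camera.height / 2 + (screen.y - camera.height / 2) / camera.zoom,
  };
}

// Returns a new camera state with the requested zoom, scrolled so that the
// world point under `focal` stays under `focal`.
function zoomAroundPoint(camera: ZoomCamera, focal: ScreenPoint, newZoom: number): ZoomCamera {
  const anchor = worldUnder(camera, focal);
  return {
    ...camera,
    zoom: newZoom,
    scrollX: anchor.x - camera.width / 2 - (focal.x - camera.width / 2) / newZoom,
    scrollY: anchor.y - camera.height / 2 - (focal.y - camera.height / 2) / newZoom,
  };
}

// Example: pinching at a point 200 px right of the center of an 800x600 viewport.
const beforePinch: ZoomCamera = { scrollX: 0, scrollY: 0, zoom: 1, width: 800, height: 600 };
const pinchFocal: ScreenPoint = { x: 600, y: 300 };
const afterPinch = zoomAroundPoint(beforePinch, pinchFocal, 2);
```

In a pinch handler, `focal` would be the midpoint of the two pointers and `newZoom` the current zoom multiplied by the pinch scale factor. A focal point at the exact center of the viewport leaves the scroll unchanged, which matches the zoom-to-center behavior described above.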

Thanks, bye.